I’ve worked with lots of brilliant content creators in my career, and one thing I’ve always hated is having to tell somebody that something they want is ‘impossible’. Worse than that though, is when I have to just declare it is impossible ‘for complicated programmer reasons’ rather than explain myself. This is something I’ve tried hard to avoid over the years, as it is always better when everybody on a team understands the tools and limitations they are working with.

Unfortunately, transparency comes up over and over again as an ‘impossible thing to do well for technical reasons I find it hard to explain’. It is a great effect that artists would always love to have, but breaks in all sorts of really annoying ways that are genuinely not possible to solve on current hardware.

So, this post is my attempt at clearly and concisely explaining, once and for all, why transparency is so hard without resorting to ‘for technical reasons’…

Rasterizing

To understand transparency, a little background in how scenes are actually rendered is needed. There are 2 general approaches to rendering:

- Ray tracing: Draws a scene by firing rays through the scene to simulate how light bounces around.

- Rasterizing: Draws a scene by taking a large number of primitives (normally triangles) and filling in the pixels on the screen that each primitive covers.

Methods of ray tracing range from the ‘too expensive to do in real time’ up to ‘way way way too expensive to do in real time’, and despite recent advances in GPUs (especially the new RTX cards), we aren’t going to be rendering entire game scenes this way for a few years yet.

So we’re left with rasterizing. A technique that in many ways is a beautiful hack. It is well suited to hardware, as it involves doing a few very simple tasks many many times over. Work out what pixels a primitive covers, then fill in each one.
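To make 'work out what pixels a primitive covers, then fill in each one' a bit more concrete, here is a minimal CPU-side sketch in Python. It is only an illustration and not how a GPU actually implements it: the names (`edge`, `covered_pixels`, `fill_triangle`), the tiny character framebuffer and the choice to test pixel centres are all assumptions made for this example.

```python
def edge(a, b, p):
    """Twice the signed area of triangle a-b-p; the sign says which side of edge a->b the point p is on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def covered_pixels(v0, v1, v2, width, height):
    """Step 1: work out which pixels the triangle covers (tested at pixel centres)."""
    pixels = []
    for y in range(height):
        for x in range(width):
            p = (x + 0.5, y + 0.5)
            w0, w1, w2 = edge(v1, v2, p), edge(v2, v0, p), edge(v0, v1, p)
            all_non_negative = w0 >= 0 and w1 >= 0 and w2 >= 0
            all_non_positive = w0 <= 0 and w1 <= 0 and w2 <= 0
            # Accept either winding order so the sketch works for any triangle.
            if all_non_negative or all_non_positive:
                pixels.append((x, y))
    return pixels


def fill_triangle(framebuffer, v0, v1, v2, colour):
    """Step 2: fill in each covered pixel."""
    height, width = len(framebuffer), len(framebuffer[0])
    for x, y in covered_pixels(v0, v1, v2, width, height):
        framebuffer[y][x] = colour


framebuffer = [["." for _ in range(8)] for _ in range(8)]
fill_triangle(framebuffer, (0, 0), (8, 0), (0, 8), "#")
print("\n".join("".join(row) for row in framebuffer))
```

Note that nothing in here knows about light, or about any other triangle in the scene. That is exactly where the speed comes from, and also where the trouble starts.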

Unfortunately, the massive gains in speed and simplicity we get with rasterizing come at a cost. As we are no longer truly simulating the behaviour of light, physical effects like shadows don’t just ‘fall out’ naturally – instead clever people had to work out how to bodge shadow effects into rasterized scenes, and they did it very well!

Depth

The first problem you hit with rasterizing is how to handle the situation in which 2 primitives cover the same pixel. Clearly you want the one in front to be visible, however unlike ray tracing, a rasterizer has to do extra work to make this happen.

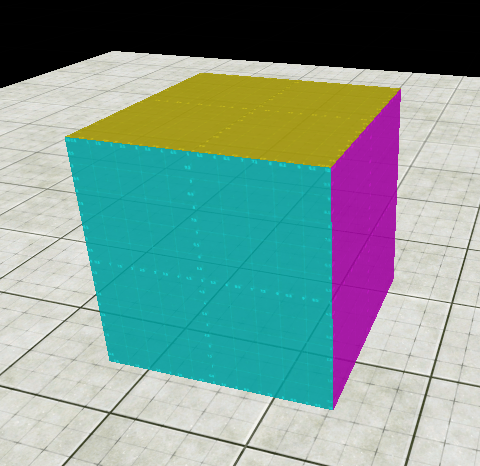

Let’s start with this lovely simple spinning cube…

Looks great, but if we turn off the rasterizer features that handle depth, weird shit instantly happens…

Here you can see that sometimes the back faces of the cube seem to be drawing over the front faces. This is because the graphics card doesn’t attempt to sort the triangles of the cube – all it knows is you’ve given it a bunch of triangles you want on screen as soon as possible. So, if it happens to draw the front faces first, and the back faces last, the back faces get drawn on top.

Fortunately, with the simple cube there is a simple solution – only draw the triangles that are facing the camera, and discard the others. This is known as back face culling.
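As a rough sketch of how 'facing the camera' can be decided, one common approach is to look at the winding order of each triangle once it has been projected onto the screen. The Python below assumes that counter-clockwise triangles (with y pointing up) are front facing; that convention, and the names `signed_area` and `cull_back_faces`, are assumptions for this example rather than anything fixed.

```python
def signed_area(v0, v1, v2):
    """Signed area of a screen-space triangle: positive when the vertices run counter-clockwise (y up)."""
    return 0.5 * ((v1[0] - v0[0]) * (v2[1] - v0[1]) - (v1[1] - v0[1]) * (v2[0] - v0[0]))


def cull_back_faces(triangles):
    """Keep only the triangles whose projected winding is counter-clockwise."""
    return [tri for tri in triangles if signed_area(*tri) > 0]


front = ((0, 0), (1, 0), (0, 1))  # counter-clockwise: treated as facing the camera
back = ((0, 0), (0, 1), (1, 0))   # clockwise: treated as facing away
print(cull_back_faces([front, back]))
```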

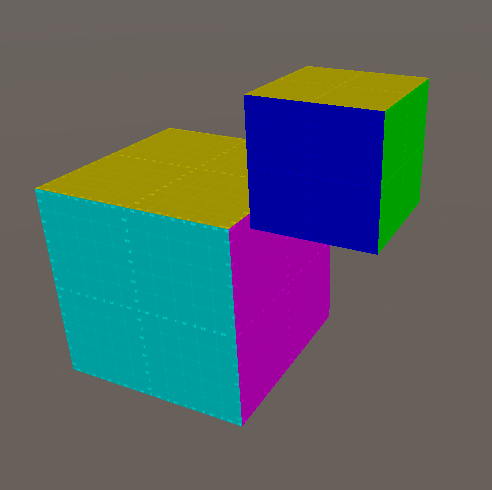

But what if we have 2 cubes?

Here culling won’t necessarily help us. We have multiple front facing triangles (such as the blue on the rear cube and the pink on the front cube) that overlap on screen. In the above image we happen to be drawing stuff in the right order, but if we flip the order around…

Because the rear cube was drawn last it simply stomped on top of the front cube.

Again however, this one can be solved pretty easily. The cubes themselves aren’t actually overlapping, so by simply sorting the objects to render, and then ensuring we always draw starting from the back and working forwards, everything just works. This is known as the painter’s algorithm.
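In code, the sorting step is about as simple as it sounds. This sketch assumes each object can be summarised by a single centre point and sorted by its distance from the camera, which is a simplification chosen for the example (the `centre` field and `painters_order` are made-up names):

```python
def distance_squared(a, b):
    """Squared distance between two 3D points (enough for sorting, no square root needed)."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def painters_order(objects, camera_position):
    """Sort objects from farthest to nearest, so nearer objects are painted last."""
    return sorted(
        objects,
        key=lambda obj: distance_squared(obj["centre"], camera_position),
        reverse=True,
    )


camera = (0.0, 0.0, 0.0)
cubes = [
    {"name": "front cube", "centre": (0.0, 0.0, 2.0)},
    {"name": "rear cube", "centre": (0.5, 0.0, 5.0)},
]
for cube in painters_order(cubes, camera):
    print("draw", cube["name"])
```

Because a whole object is reduced to one distance, this only gives the right answer when objects don't interpenetrate.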

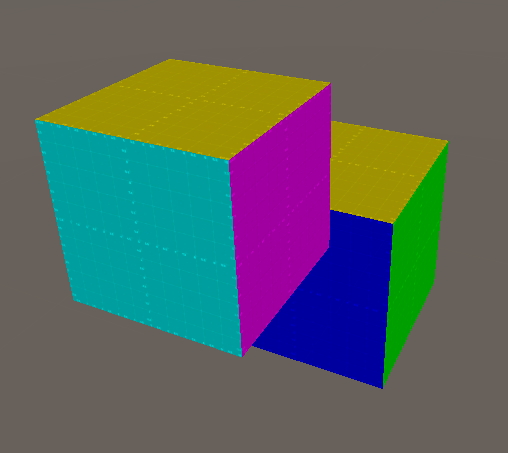

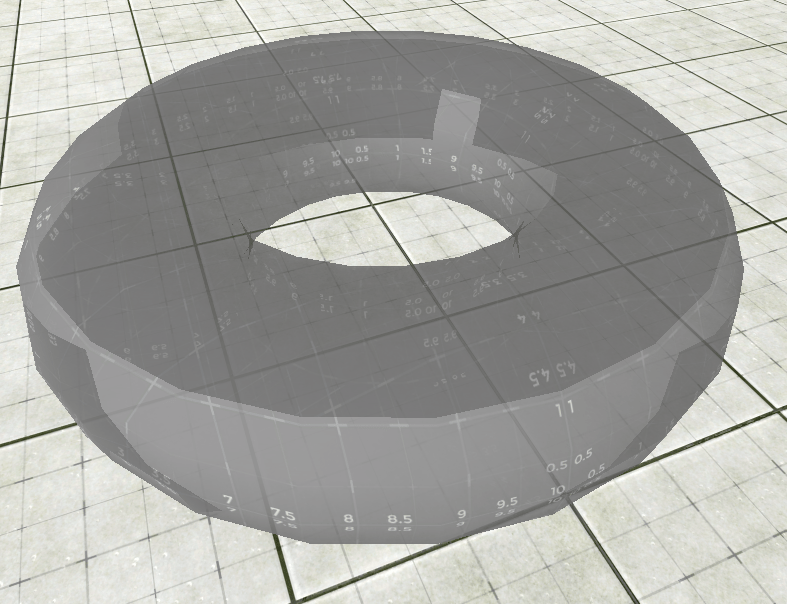

Unfortunately, there is another case that neither culling nor sorting can solve:

With only culling and sorting, we can either end up with this…

Or this…

And problems like this one don’t need some contrived situation with overlapping cubes. Any concave mesh, which turns out to be most models, is going to suffer this sort of problem:

It is solved with the use of a depth buffer and depth testing. These techniques underpin modern rasterizing. The basic idea is that in addition to rendering your pixel colours you also render how far each pixel is from the camera, aka its depth.

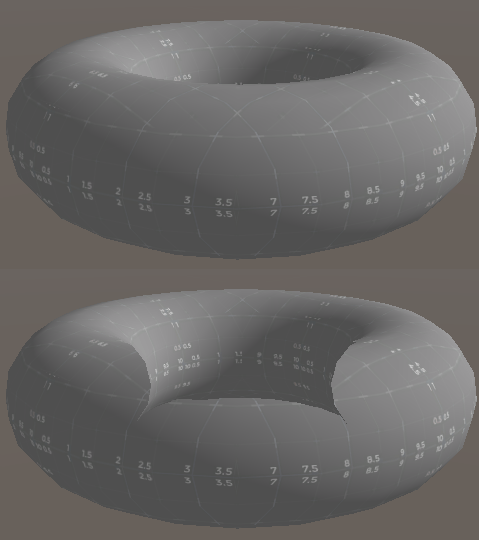

Here we visualise the depth buffer for the torus. Red is close to the camera, blue is further away.

And again for the 2 cubes…

Using this additional information the rasterizer is able to check, before filling in a pixel, if it has already been filled in by another, closer triangle.
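The check itself is tiny. Here is a minimal sketch, using a single row of pixels and fragments given as `(x, depth, colour)` tuples; both are simplifications assumed for the example, and smaller depth is taken to mean closer to the camera.

```python
import math


def draw_with_depth_test(fragments, width):
    """Write fragments into a one-row colour buffer, keeping only the closest one per pixel."""
    colour_buffer = [None] * width
    depth_buffer = [math.inf] * width  # 'infinitely far away' until something is drawn
    for x, depth, colour in fragments:
        if depth < depth_buffer[x]:  # only fill in if nothing closer is already there
            depth_buffer[x] = depth
            colour_buffer[x] = colour
    return colour_buffer, depth_buffer


fragments = [(0, 5.0, "blue"), (0, 2.0, "pink"), (1, 5.0, "blue")]
forwards, _ = draw_with_depth_test(fragments, width=2)
backwards, _ = draw_with_depth_test(list(reversed(fragments)), width=2)
print(forwards, backwards)
```

Both draw orders end up with the same picture, which is the whole point.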

The depth buffer actually removes the need for sorting entirely. Indeed, in many renderers it is common to flip the sorting around and render front objects first, so they fill in the depth buffer as quickly as possible. That way the GPU doesn’t end up running expensive shaders for background objects only to have the results trampled later by foreground objects.
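To see why front-to-back helps, here is a small sketch that counts how many fragments at a single pixel would pass the depth test and therefore need shading. It assumes the depth test can reject a fragment before its shader runs, which is typical but depends on the hardware and the shader; `count_shaded_fragments` is a made-up name for the example.

```python
import math


def count_shaded_fragments(depths_in_draw_order):
    """Count the fragments at one pixel that pass the depth test and so need shading."""
    nearest = math.inf
    shaded = 0
    for depth in depths_in_draw_order:
        if depth < nearest:  # passes the depth test, so the shader would run
            nearest = depth
            shaded += 1
    return shaded


depths = [1.0, 2.0, 3.0, 4.0]
print(count_shaded_fragments(sorted(depths)))                # front-to-back
print(count_shaded_fragments(sorted(depths, reverse=True)))  # back-to-front
```

With four overlapping surfaces, front-to-back shades the pixel once, while back-to-front shades it four times and throws three of the results away.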

Transparency

Finally on to actual transparency! The big problem with transparency comes down to 2 points:

- Transparent objects must be drawn back-to-front for the effect to look correct (as we will see imminently)

- We have just proven above that it is not always possible to reliably draw objects back-to-front.

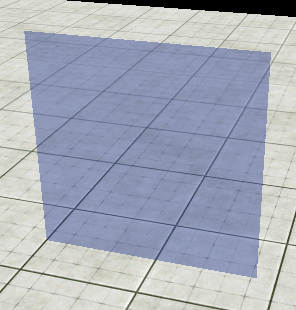

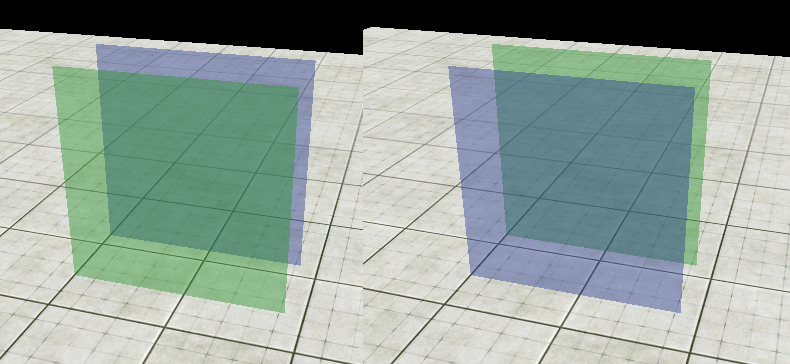

Let’s start with a simple quad, which in a game might easily be a nice flat glass window:

Lovely! But what if we want a green pane of glass as well?

Here you see the same scene, however on the left the green glass is in front, and on the right the blue glass is in front. The thing to note is that the central overlapping section is different in each image. Just to prove it, here they are sliced together:

This is because, to colour a transparent pixel correctly, you must know the colour of whatever is behind it: the final colour is a blend of the glass and whatever has already been drawn there. This means that if you have multiple transparent objects, the draw order is very important.
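A quick numeric sketch shows the same thing. It assumes the usual 'over' blend (source colour times alpha, plus destination colour times one minus alpha), a white background and 50% opaque glass; those values are picked for the example and are not taken from the images above.

```python
def blend_over(src_rgb, src_alpha, dst_rgb):
    """Blend a transparent source colour on top of whatever is already in the pixel."""
    return tuple(src_alpha * s + (1.0 - src_alpha) * d for s, d in zip(src_rgb, dst_rgb))


WHITE = (1.0, 1.0, 1.0)
GREEN = (0.0, 1.0, 0.0)
BLUE = (0.0, 0.0, 1.0)

# The pane drawn last is the one that ends up 'in front'.
blue_in_front = blend_over(BLUE, 0.5, blend_over(GREEN, 0.5, WHITE))
green_in_front = blend_over(GREEN, 0.5, blend_over(BLUE, 0.5, WHITE))
print(blue_in_front)   # (0.25, 0.5, 0.75)
print(green_in_front)  # (0.25, 0.75, 0.5)
```

Same two panes, same background, different result depending on which one was drawn last.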

Fortunately, the above situation is actually pretty easy to deal with by sorting, and indeed this is why many games comfortably have semi-transparent glass windows.

But what if we make our cube slightly transparent? How should it look? A first blast with all the depth testing and culling turned on would look like this:

This, however, is pretty clearly not a cube made of transparent material, as we can’t see the back faces at all. It is simply a faded version of the original opaque cube. This is because all the work we did to get the opaque cube rendering correctly with culling and depth tests has hidden the back faces entirely. To see them, the culling and depth tests must be turned off:

Yay! So what’s the problem? It’s right back to where we started, as shown in this video:

If we’re lucky enough to draw the faces in the correct order, the cube looks right. However if they’re in the wrong order, the back faces trample the front, and the effect breaks.

Just as before, in the case of the cube there are some clever tricks we could do to get it working. In practice, you could first render all the back faces, then render all the front faces. However just like before, this technique falls apart when more complex or overlapping objects come into play:

Bleh!

So how do we solve this?

Sadly, right now, the truth is we really don’t, or at least can’t do it anywhere near fast enough 😦 Rasterizer based hardware simply doesn’t function in a transparency friendly way. For opaque objects, provided there are no bugs in shaders, we can guarantee they will always look correct, regardless of the scene. However for transparent objects, we must carefully construct scenes to hide transparency artefacts.

A common bug might be a semi-transparent object held by the player which looks fine until they pass their hand through some other semi-transparent object such as a window or smoke. In this situation, at some point, the hand is going to trample the smoke, or the smoke is going to trample the hand. Something is going to break!

The classic glitch, familiar to anyone who’s been in games long enough, looks like this:

Note how the opaque cube (right) interacts correctly with the transparent green pane of glass. However when the transparent cube interacts with the transparent glass, it almost seems to ‘pop’ behind it.

Once we finally get to truly ray traced games, this issue will disappear along with a host of others. A ray tracer ultimately simulates the physics of light, so stuff like transparency just works. However for now, we’re stuck with rasterizers and grumpy programmers!

So there you have it! My long winded but hopefully helpful to somebody explanation of exactly why transparency is a total PITA and why programmers typically grumble about technical reasons etc etc when anybody mentions something like transparent curtains…

I’d like to understand what the slow rasterization solution is at the moment even if it’s not viable for real time. These issues are apparently addressable on desktop GPUs, but I’m wondering what alternate approach might be needed for a tile deferred GPU and what makes these things expensive.


There have been various proposals over the years. There’s no core system (that I know of) to address this on desktop GPUs (other than ray tracing or burning lots of cycles to get very specific situations to work). The last genuine one I read (from the HPG conference) was effectively to do deferred rendering, but with an array of values in a compute buffer per pixel instead that recorded all the surfaces that had written to it. Then a final pass would sort the surfaces per pixel and perform the necessary math to combine them all.
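As a very rough CPU-side sketch of that idea (not the actual proposal, just my reading of it), each pixel keeps a list of every transparent surface that wrote to it, and a final pass sorts each list by depth and blends back-to-front. The `FragmentLists` class, the tuple layout and the 'over' blend are all assumptions made for this example.

```python
from collections import defaultdict


def blend_over(src_rgb, src_alpha, dst_rgb):
    """Blend a transparent source colour on top of the colour already accumulated."""
    return tuple(src_alpha * s + (1.0 - src_alpha) * d for s, d in zip(src_rgb, dst_rgb))


class FragmentLists:
    """Per-pixel record of every transparent surface that wrote to that pixel."""

    def __init__(self):
        self.lists = defaultdict(list)

    def record(self, pixel, depth, rgb, alpha):
        # First pass: just remember the surface, no blending yet.
        self.lists[pixel].append((depth, rgb, alpha))

    def resolve(self, pixel, background):
        # Final pass: sort farthest first, then blend back-to-front.
        colour = background
        for _depth, rgb, alpha in sorted(self.lists[pixel], key=lambda f: f[0], reverse=True):
            colour = blend_over(rgb, alpha, colour)
        return colour


WHITE, GREEN, BLUE = (1.0, 1.0, 1.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)

a = FragmentLists()
a.record((0, 0), 2.0, GREEN, 0.5)
a.record((0, 0), 1.0, BLUE, 0.5)

b = FragmentLists()  # same surfaces, submitted in the opposite order
b.record((0, 0), 1.0, BLUE, 0.5)
b.record((0, 0), 2.0, GREEN, 0.5)

print(a.resolve((0, 0), WHITE), b.resolve((0, 0), WHITE))
```

The result no longer depends on draw order, but every pixel now needs storage for an unknown number of surfaces plus a sort, which gives a feel for where the cost comes from.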
