Fairly recently I started researching the use of deterministic networking in a Unity game. With this technique, all users simply send each other the buttons they press on the controllers, and by running exactly the same simulation, experience exactly the same gameplay. This approach to networking is less common than the standard ‘keep the stuff that matters roughly in sync’ approach, but is very useful (and indeed necessary) if you have a game that relies on AI or physics doing exactly the same thing for everybody.

After a bit of research, I was surprised to discover that the internet is littered with inaccurate and often entirely incorrect information around determinism. Whilst I’ve not had to solve these problems with Unity before, I do have a lot of experience in this area. Little Big Planet had deterministic networking, and on No Man’s Sky I helped ensure the procedural generation was both deterministic and consistent between PC, PS4 and even the PS4 GPU.

In this series I’ll try to clear up some of the confusion and misconceptions, starting with a quick review of determinism and how to test it. Then we’ll cover an area that quite unfairly, but most often, gets the blame – floating point numbers. Later, I intend to cover some of the other big and more common culprits, and finish off with some digging into Unity specific problems.

What Actually Is Determinism

A deterministic system can be defined by a very simple statement:

If a system is run multiple times with the same set of inputs, it should always generate the same set of outputs.

In computer terms, this means that if we provide a program with some given input data, we should be able to run that code as many times as we like, and always expect the same results. And by the same, I mean exactly the same – the same bytes, with the same bits, in the same order. In technical terms, bitwise identical.
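To make ‘bitwise identical’ concrete, here is a minimal sketch of a bit-level comparison for floats. The names `BitwiseCompare`, `Bits` and `BitwiseEqual` are made up for this illustration; the only library calls are the standard `BitConverter` ones.

```csharp
using System;

public static class BitwiseCompare
{
    // Reinterprets the 4 bytes of a float as an int so the raw bits can be compared.
    public static int Bits(float value)
    {
        return BitConverter.ToInt32(BitConverter.GetBytes(value), 0);
    }

    // True only if both floats are made of exactly the same 32 bits.
    public static bool BitwiseEqual(float a, float b)
    {
        return Bits(a) == Bits(b);
    }
}
```

The distinction matters because `==` and bitwise equality are not the same test. Positive and negative zero compare equal with `==`, yet their bits differ, so a sync test that compares raw bytes would (correctly) flag them as a difference.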

A couple of examples:

- If I write a function that adds the numbers 2 and 3, it should always output 5 no matter how many times I call it.

- If I write an entire physics engine and provide it with 10000 rigid bodies all starting with a given position/orientation, and run it for 2 years, it should always result in the same set of new positions/orientations no matter how many times I run it.

Computers are in fact inherently deterministic – that’s simply how processors work. If the add instruction on an i9 Processor could take 2 values and sometimes output slightly different results, it would be defined as a broken PC, and you’d probably be needing to replace it soon!

So where does indeterminism come from, and why does it seem so prevalent when computers are naturally deterministic? Well, the reality is that defining the inputs of a system is not always as simple as it sounds.

Testing Determinism

Before investigating it, we should come up with a good solid way of testing the determinism of our code. Fortunately, when I joined Media Molecule, the very clever team had already solved this problem, so here’s how…

    using System;    //for BitConverter
    using System.IO; //for StreamWriter

    public static class SyncTest
    {
        static StreamWriter m_stream;

        //begin just opens a file to write to
        public static void Begin(string filename)
        {
            m_stream = new StreamWriter(filename);
        }

        //simple function that just writes a line with some text to the stream
        public static void Write(string description)
        {
            m_stream.Write(string.Format("{0}\n", description));
        }

        //writes a line with a description, an integer value, and the exact bytes
        //that make up the integer value, all padded nicely with spaces.
        public static void Write(string description, int value)
        {
            byte[] bytes = BitConverter.GetBytes(value);
            string hex = BitConverter.ToString(bytes).Replace("-", string.Empty);
            string txt = string.Format("{0,-30} {1,-15} 0x{2}\n",
                description, value, hex);
            m_stream.Write(txt);
        }

        //closes the file
        public static void End()
        {
            m_stream.Close();
        }
    }

This very simple class just opens up a file in Begin, then provides a few utilities to write text to that file. Specifically, we’ve added a function that writes a human readable integer value along with a description and the exact hexadecimal value of its bytes.

Now let’s write a very simple program that uses it…

    private void Start()
    {
        SyncTest.Begin("sync.txt");
        AddNumbers(2, 3);
        SyncTest.End();
    }

    int AddNumbers(int x, int y)
    {
        SyncTest.Write("AddNumbers");
        SyncTest.Write("x", x);
        SyncTest.Write("y", y);
        int res = x + y;
        SyncTest.Write("res", res);
        return res;
    }

All this does is add a couple of numbers together. However along the way we’re telling our SyncTest tool to dump out the inputs and outputs. The resulting lines of text are as follows:

    AddNumbers
    x                              2               0x02000000
    y                              3               0x03000000
    res                            5               0x05000000

So why do this? Well the beauty is, we can now make some more modifications to our program to run the same algorithm multiple times, outputting to 2 different files:

    private void Start()
    {
        SyncTest.Begin("sync1.txt");
        RunTests();
        SyncTest.End();

        SyncTest.Begin("sync2.txt");
        RunTests();
        SyncTest.End();
    }

    void RunTests()
    {
        AddNumbers(2, 3);
    }

    int AddNumbers(int x, int y)
    {
        SyncTest.Write("AddNumbers");
        SyncTest.Write("x", x);
        SyncTest.Write("y", y);
        int res = x + y;
        SyncTest.Write("res", res);
        return res;
    }

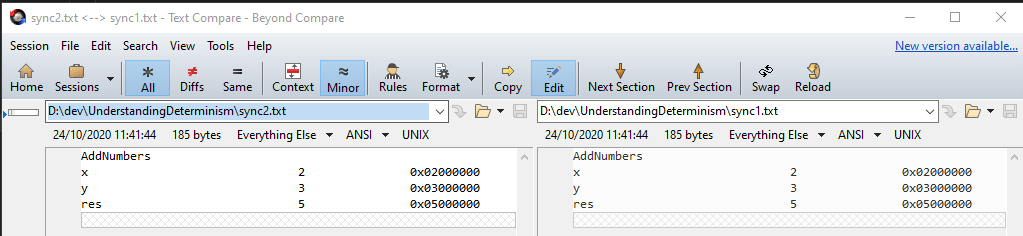

Having written the data out, we can use a simple text-comparison program to check for any differences. I use Beyond Compare for this purpose:

No differences = no indeterminism! Yay

Let’s mess with our test and introduce some indeterminism:

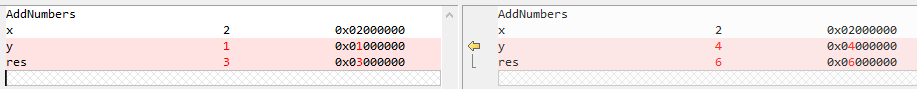

AddNumbers(2, Random.Range(0,10));

Now each run we’re going to pass a new random number in! Clearly the inputs now differ, and thus, so will our outputs. Viewing this in our logs:

Not only does our logging show the issues, but it tells where the first difference occurred (in this case the value of y was 1 in the 1st test, and 4 in the 2nd).
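If you actually want the randomness, one common way to keep it deterministic is to make the seed an explicit input. The sketch below uses the standard `System.Random` rather than Unity’s `Random`, purely so it runs anywhere; `SeededInput` and `Draw` are names invented for this example.

```csharp
using System;

public static class SeededInput
{
    // Returns 'count' values in the range [0, 10) from a generator
    // that was created with an explicit seed.
    public static int[] Draw(int seed, int count)
    {
        Random rng = new Random(seed);
        int[] values = new int[count];
        for (int i = 0; i < count; i++)
        {
            values[i] = rng.Next(0, 10);
        }
        return values;
    }
}
```

Two calls to `Draw` with the same seed produce the same sequence within one runtime, so the seed simply becomes one more input to log. I would not assume the sequence matches across different runtime versions or platforms without running it through the sync tester first.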

For this blog we’ll only be using a very simple sync tester like the one above, however with work they can be expanded to record more detailed info like stack traces, or even run as a replay in a debugger allowing you to analyse determinism problems with the full support of your programming tools!
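As one small step in that direction, the comparison itself can be automated rather than eyeballed in a diff tool. This is only a sketch: `SyncCompare` and `FirstDifference` are hypothetical names, and it works on lines that have already been loaded into memory.

```csharp
using System;

public static class SyncCompare
{
    // Returns the index of the first line that differs between two logs,
    // or -1 if the logs are identical. If one log is simply longer,
    // the index of its first extra line is returned.
    public static int FirstDifference(string[] a, string[] b)
    {
        int shared = Math.Min(a.Length, b.Length);
        for (int i = 0; i < shared; i++)
        {
            if (!string.Equals(a[i], b[i], StringComparison.Ordinal))
            {
                return i;
            }
        }
        return a.Length == b.Length ? -1 : shared;
    }
}
```

Feeding it the result of `System.IO.File.ReadAllLines` for sync1.txt and sync2.txt gives a pass/fail check that could run after every test session.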

So, armed with our determinism tester, let’s do some digging…

Floating Point Inaccuracy

If you do a quick search, the first thing that gets the blame for indeterminism is floating point numbers, despite the fact that unless you’re doing cross platform stuff, they’re rarely guilty! That said, floating point precision comes up a lot, so let’s take a look at a couple of examples:

- Floating point numbers can represent all integer values within a certain range precisely, however they cannot represent all fractional numbers precisely.

- Some floating point operations, such as addition, will lose precision when adding together values of significantly different magnitudes.

The first is pretty simple. The values 0.5, 0.25, 0.75, 0.875 can all be represented precisely by floating point numbers. This is because they can be expressed precisely as the sum of fractional powers of 2:

- 0.5 = 1/2

- 0.25 = 1/4

- 0.75 = 1/2+1/4

- 0.875 = 1/2+1/4+1/8

Other numbers however would require an infinite number of fractions of 2 to be represented this way, so can only be approximated as floating point values.
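A quick way to see which values survive is to squeeze a number into a float and widen it back out again. This is a rough sketch with a made-up helper name (`IsExactAsFloat`). It starts from a double, so it is only a sensible check for short literals like the ones above.

```csharp
public static class FloatExactness
{
    // Narrows a double to a 32-bit float and widens it again.
    // If nothing changed, the value fits in a float exactly.
    public static bool IsExactAsFloat(double value)
    {
        float narrowed = (float)value;
        return (double)narrowed == value;
    }
}
```

With this, 0.5, 0.25, 0.75 and 0.875 all report true, while 0.1 and 2.0 / 3.0 report false because they get rounded to the nearest value a float can hold.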

The latter point occurs because floating point numbers can only store so many (binary) digits. You could store both 1000000000000000 and 1 using a floating point number. However because they are of such drastically different size, on a 32 bit floating point unit you would find:

1000000000000000+1 = 1000000000000000
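The same effect shows up at much smaller sizes. A 32 bit float has 24 bits of precision, so 16777216 (2 to the power 24) is the first whole number at which adding 1 gets lost. A minimal sketch, with a hypothetical `Add` helper whose explicit cast makes sure the result really is rounded to 32 bits:

```csharp
public static class FloatAbsorption
{
    // Adds two floats and forces the result back to 32-bit precision.
    public static float Add(float a, float b)
    {
        return (float)(a + b);
    }
}
```

`Add(16777215f, 1f)` gives 16777216 as you would hope, but `Add(16777216f, 1f)` also gives 16777216, because 16777217 cannot be stored. Note that a big decimal number like the one above is itself stored as the nearest value a float can represent, so it is already slightly off before any addition happens.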

So how can a computer be both imprecise and deterministic? It’s quite simple really – a floating point unit is deterministically imprecise! It will lose precision, but it will always lose the same precision. Let’s test this by introducing some floating point numbers to our program:

    float AddNumbers(float x, float y)
    {
        SyncTest.Write("AddNumbers (float)");
        SyncTest.Write("x", x);
        SyncTest.Write("y", y);
        float res = x + y;
        SyncTest.Write("res", res);
        return res;
    }
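The SyncTest class shown earlier only has an overload for int, so the calls above need a float version too. The post doesn’t show one, so treat the following as my guess at a reasonable shape rather than the original code: `SyncTestFloatFormat` and `FormatLine` are invented names, and the choice of the "R" format with the invariant culture is an assumption made so the text side of the log doesn’t change with the machine’s locale.

```csharp
using System;
using System.Globalization;

public static class SyncTestFloatFormat
{
    // Builds a log line with a description, a readable float value and
    // the exact bytes of the float, padded like the int version.
    public static string FormatLine(string description, float value)
    {
        byte[] bytes = BitConverter.GetBytes(value);
        string hex = BitConverter.ToString(bytes).Replace("-", string.Empty);
        // Invariant culture: always a '.' decimal separator, whatever the locale.
        string text = value.ToString("R", CultureInfo.InvariantCulture);
        return string.Format(CultureInfo.InvariantCulture,
            "{0,-30} {1,-15} 0x{2}\n", description, text, hex);
    }
}
```

A `Write(string description, float value)` overload inside SyncTest would then just pass the result of `FormatLine` to the stream. The hex column is the part that really matters, since two floats can print the same text but still differ in their last bits.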

And the test…

    void RunTests()
    {
        AddNumbers(2, 5);
        AddNumbers(1f, 1000000000000f);
        AddNumbers(2f/3f, 0.5f);
    }

And our results…

As expected, the first floating point test has added 1 to 1e12 (1000000000000), and just ended up with 1e12 again. Similarly, attempting to add 0.5 to 2/3 (which ends up represented inaccurately as 0.666..67) ends up with 1.1666..7. However in both these cases, the errors occur in both runs, and the floating point representations of all inputs and outputs match.

The upshot of the above is that if you’re just working with one application on one platform, the odds are floating point won’t be your problem, because any imprecision is deterministic. However, when going for cross platform determinism you can hit some real issues, as described in the following few sections.

Floating Point Hardware Behaviour

Despite the inherent determinism of a floating point unit, it still has the potential to cause problems, specifically when attempting to create an application that performs identically on different bits of hardware. For example, on No Man’s Sky I attempted (and smugly eventually succeeded!) to get PS4 GPU compute shaders to deterministically run the team’s awesome planet making algorithms, and generate exactly the same results as a PC CPU. In determinism terms, you could say that if different hardware behaves differently, then the hardware becomes an input to your algorithm and thus affects the output.

Floating point numbers have to deal with some odd scenarios such as infinities, different types of zeros, what happens when rounding things etc etc. There’s a variety of these areas in which the floating point unit has to make some form of approximation or edge case decision.

These days, IEEE standards exist that say exactly how every floating point unit on every platform should deal with every scenario. However, not all platforms follow these guidelines to the letter, and others provide low level hardware options to disable the perfect behaviour in exchange for slightly better performance.

I’ll confess to not knowing exactly how prevalent this is these days. I think it is less of an issue with modern hardware, and have myself worked with PCs (AMD+Intel), NVidia GPUs, Macs, PS4 (CPU and GPU) and found the actual hardware rarely to be the cause of indeterminism. Really the only way to be certain if you’re going cross platform though is to test it, ideally with some fairly low level C code (with FastMath disabled – see below) so you know there’s no chance of any other factors interfering.
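One of the edge cases that hardware options tend to switch off is support for very tiny ‘subnormal’ numbers, which some platforms flush to zero for speed. The probe below is a sketch in C# to match the rest of the post rather than the low level C test suggested above. `SubnormalProbe` and its methods are names I’ve made up for it.

```csharp
using System;

public static class SubnormalProbe
{
    // Smallest positive normal float (2^-126), built from its bit pattern
    // so there is no doubt about which value we start with.
    public static float SmallestNormal()
    {
        return BitConverter.ToSingle(BitConverter.GetBytes(0x00800000), 0);
    }

    // Halving the smallest normal value should give a non-zero subnormal
    // if the hardware follows IEEE 754. If subnormals are flushed to zero,
    // this returns false instead.
    public static bool SubnormalsPreserved(float smallestNormal)
    {
        float half = (float)(smallestNormal * 0.5f);
        return half != 0f;
    }
}
```

Logging the result of `SubnormalsPreserved(SmallestNormal())` through the sync tester on each target platform is a cheap way to find out whether they agree on this particular edge case. It says nothing about the others, so it is a starting point and not a guarantee.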

Floating Point FastMath

A much more prevalent cause of indeterminism comes not from hardware but from compilers. Specifically optimising compilers that use a technique often referred to as FastMath. When this compiler option is turned on (which in some cases it is by default!), it allows the compiler to assume a floating point unit obeys algebraic laws perfectly, and thus mess with your code if it thinks it can express your math in a more efficient way.

Take this code for example:

    float SumNumbers(float x, float y, float z, float w)
    {
        float res = x;
        res += y;
        res += z;
        res += w;
        return res;
    }

Simple enough! Add 4 numbers together. However the optimizers amongst you may have noticed this is a particularly inefficient way of doing things, as it is a chain of dependent operations: each addition has to wait for the result of the previous one. A faster version would be this:

    float SumNumbers(float x, float y, float z, float w)
    {
        float xy = x + y;
        float zw = z + w;
        return xy+zw;
    }

Mathematically this function does the same job, however it executes 2 independent operations then combines the results. Why this is faster doesn’t really matter and is beyond the scope of this blog. The key is, the 2 functions do the same thing, so should produce the same result.

The FastMath option tells the compiler that if it finds your SumNumbers function written the first way, it is allowed to re-write it the 2nd way, on the basis that mathematically they should return the same result.

To simulate this, I’ll extend the program as follows:

    void RunTests(int test_idx)
    {
        float x = 0.3128364f;
        float y = 0.12412124f;
        float z = 0.8456272f;
        float w = 0.82154819f;

        if (test_idx == 0)
        {
            SumNumbers(x, y, z, w);
        }
        else
        {
            SumNumbersFast(x, y, z, w);
        }
    }

    float SumNumbers(float x, float y, float z, float w)
    {
        SyncTest.Write("SumNumbers");
        SyncTest.Write("x", x);
        SyncTest.Write("y", y);
        SyncTest.Write("z", z);
        SyncTest.Write("w", w);
        float res = x;
        res += y;
        res += z;
        res += w;
        SyncTest.Write("res", res);
        return res;
    }

    float SumNumbersFast(float x, float y, float z, float w)
    {
        SyncTest.Write("SumNumbers");
        SyncTest.Write("x", x);
        SyncTest.Write("y", y);
        SyncTest.Write("z", z);
        SyncTest.Write("w", w);
        float xy = x + y;
        float zw = z + w;
        float res = xy + zw;
        SyncTest.Write("res", res);
        return res;
    }

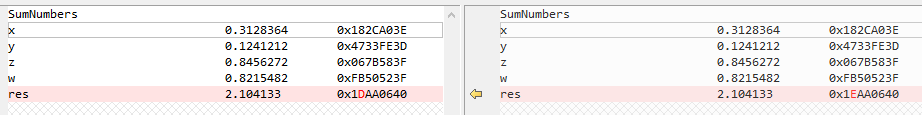

I’ve supplied my RunTests function with a test index, so I can run my first version on the first tests, and the second version on the 2nd test. Let’s see what the sync tests result in…

Shock horror! We have differences. A difference so tiny in fact that it’s not visible if you just print the value of the result to 6 decimal places. However because floating point units aren’t precise, doing the maths 2 different ways produced subtly different results. FastMath allows the compiler to choose which way to do it, and different compilers on different platforms may make different decisions. This is a very common reason for cross platform determinism issues.
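If you want a case where the effect is impossible to miss, the sketch below adds the same 3 numbers in two different orders. The class and method names are just for illustration, and the explicit casts are there to make sure each intermediate result is rounded to a 32 bit float.

```csharp
public static class Reassociation
{
    // Computes (a + b) + c, rounding to float after each step.
    public static float LeftToRight(float a, float b, float c)
    {
        float ab = (float)(a + b);
        return (float)(ab + c);
    }

    // Computes a + (b + c), rounding to float after each step.
    public static float RightToLeft(float a, float b, float c)
    {
        float bc = (float)(b + c);
        return (float)(a + bc);
    }
}
```

With a = 100000000, b = -100000000 and c = 1, `LeftToRight` returns 1 but `RightToLeft` returns 0. In the second version the 1 is swallowed when it is added to -100000000, because floats of that size are spaced 8 apart. Same numbers, same maths on paper, different answer.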

For fun, let’s take the above example and add some rather contrived extra bits (note: numbers meaningless – I made em up by fiddlin!).

    void RunTests(int test_idx)
    {
        float x = 0.3128364f;
        float y = 0.12412124f;
        float z = 0.8456272f;
        float w = 0.82154819f;

        float res;
        if (test_idx == 0)
        {
            res = SumNumbers(x, y, z, w);
        }
        else
        {
            res = SumNumbersFast(x, y, z, w);
        }

        res *= 3212779;
        SyncTest.Write("bigres", res);

        if ( res >= 6760114.5f)
        {
            SyncTest.Write("Do 1 thing");
        }
        else
        {
            SyncTest.Write("Do another thing");
        }
    }

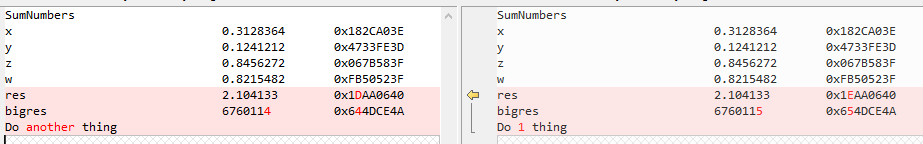

Looking at the sync results:

We can see how when plumbed through some big calculations and used in actual logical operations, these simple differences can result in dramatic changes in program behaviour. Whilst the above may seem a little contrived, applications like procedural content generators, physics engines or even full games execute large amounts of complex mathematics and branching code. All it takes is 1 single operation in that whole mess to exhibit indeterminism, and the end results could be utterly, completely different. Indeed, my first attempt on No Man’s Sky suffered similar issues and resulted in entire forests being different on PS4 (happily fixed pre-launch)! Just as with hardware, when FastMath is turned on, the compiler behaviour becomes an input because it affects the algorithm and thus the output.

As a quick side note, because FastMath is only used in optimized builds, it is often also responsible for determinism issues between debug and release versions of an app.

Trigonometry and other fancy functions

Many mathematical functions such as sin, cos, tan, exp and pow do not exist as single instructions on hardware (or only exist on some hardware), and are instead implemented as part of maths libraries that come with whatever language you’re using. In C++ for example, this is the C standard lib, or in Unity it’s good old ‘Mathf.bla’.

Sadly, there seems to be absolutely no standardisation over the techniques or implementations that creators of these libraries use. As a result, different compilers on a given platform, or different platforms, or different languages, or different versions of languages may or may not give the same results! Thus the math library implementation becomes an input to your algorithm!

As an example, I knocked together my own sin function using a minimax polynomial (no need to have any idea whatsoever what one is or how it works – that’s why we have math libraries and mathematicians!).

    void SinTest(int test_idx)
    {
        for (int angle_degrees = 0; angle_degrees <= 90; angle_degrees++)
        {
            float angle_radians = angle_degrees * Mathf.Deg2Rad;

            float res;
            if (test_idx == 0)
            {
                res = Mathf.Sin(angle_radians);
            }
            else
            {
                res = MiniMaxSin(angle_radians);
            }

            SyncTest.Write("Sin angle", angle_degrees);
            SyncTest.Write("Sin result", res);
            SyncTest.Write("");
        }
    }

    float MiniMaxSin(float x)
    {
        float x1 = x;
        float x2 = x * x;

        return
            x1 * (0.99999999997884898600402426033768998f +
            x2 * (-0.166666666088260696413164261885310067f +
            x2 * (0.00833333072055773645376566203656709979f +
            x2 * (-0.000198408328232619552901560108010257242f +
            x2 * (2.75239710746326498401791551303359689e-6f -
            x2 * 2.3868346521031027639830001794722295e-8f)))));
    }

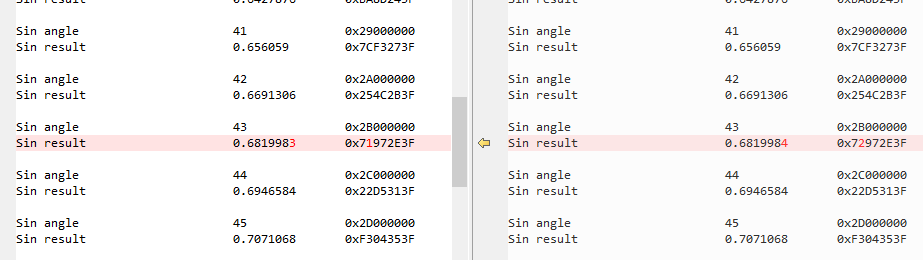

This example compares the unity ‘Mathf.Sin’ to my own version and prints out the result for 90 different angles. If we look at the sync test though, at 43 degrees we get a slightly different result…

There’s nothing wrong with either version – they both approximate the mathematical sin function to a given level of precision. But just as with our FastMath problems, because they’re implemented differently, they give slightly different results that could turn into drastic changes in behaviour over time.

If you run into this, the only 100% robust approach is to download or write your own platform agnostic math libraries, and compile them yourself with things like FastMath turned off.

The last floating point resort

All of the issues we’ve discussed so far relate to floating point numbers. If developing for one platform, it’s relatively unlikely you’ll actually hit any of them, as they’re all dependent on different hardware, compiler or software library implementations.

If you do hit them however, they can be a real headache for all sorts of reasons. Even if you were prepared to ensure hardware settings were correct, fiddle with low level compiler options and implement custom math libraries, you may well be using fundamentally different hardware (such as CPU/GPU) or libraries / engines (such as Unity) in which you don’t have control over these features.

One fairly brutal option available as a last resort is simply to limit precision by chopping off some digits! Despite various Stack Overflow based opinions, in certain scenarios this approach can work perfectly well:

    float LoseSomePrecision(float x)
    {
        x = x * 65536f;
        x = Mathf.Round(x);
        x = x / 65536f;
        return x;
    }

Here we simply:

- Multiply the floating point by a power of 2 constant

- Round it to an integer

- Divide the result by the same power of 2 constant

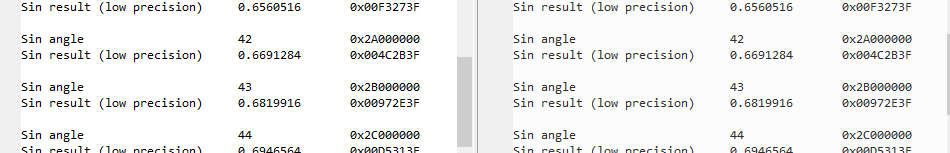

After plugging it in to our sin tests:

We can see how chopping off a few bits of precision has made both tests consistent (though less accurate).

Being a decimal society, it might have been tempting to just multiply by 1000000 and then divide by 1000000. However, floating point numbers work with binary, so if we want to actually reduce the number of bits required to represent our value, we must use a power of 2.
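Here is the same idea as a standalone sketch that doesn’t need Unity, using `Math.Round` in place of `Mathf.Round`. The names `Quantize`, `To16FractionalBits` and `NextUp` are mine. `NextUp` is only there so we can create two floats that differ by a single bit and see what happens to them.

```csharp
using System;

public static class Quantize
{
    // Snaps a float to the nearest multiple of 1/65536. Because 65536 is a
    // power of 2, the multiply and divide do not add any rounding error
    // of their own (for values well inside the float range).
    public static float To16FractionalBits(float x)
    {
        float scaled = (float)(x * 65536f);
        double rounded = Math.Round((double)scaled);
        return (float)(rounded / 65536.0);
    }

    // Returns the next representable float above a positive, finite value
    // by adding 1 to its bit pattern.
    public static float NextUp(float x)
    {
        int bits = BitConverter.ToInt32(BitConverter.GetBytes(x), 0);
        return BitConverter.ToSingle(BitConverter.GetBytes(bits + 1), 0);
    }
}
```

For a typical value such as 0.1f, the original and `NextUp(0.1f)` are different floats but snap to exactly the same result, which is the behaviour we are after. It is worth knowing the limits though. Two values that sit either side of a rounding boundary (for example 0.5f / 65536f and the float just above it) still snap to different results, so this makes mismatches much rarer but does not rule them out entirely.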

Of course, this technique won’t help you if your precompiled physics engine isn’t deterministic cross platform. In that situation you’ve really only got one option – don’t use the precompiled physics engine. But if after digging you’re finding it’s just a few math ops that throw the whole system off, limiting precision can be the solution.

Summary

In this post we’ve mainly looked at the low level issues that can crop up when dealing with floating point numbers, and how when dealing with multiple platforms or compilers, inconsistencies in low level behaviour can cause some real headaches.

In reality, variation in floating point behaviour is only likely to crop up when comparing multiple compilers / platforms. More often than not it’s issues with the application implementation (or that of libraries/engines it depends on) that result in determinism problems. In the next post we’ll look at the basics of application level determinism and one of the big culprits – global state.